How Service Worker Cache Works - Does It Make Your Site Faster?

Service workers are this wonderful new web platform feature that lights up some great functionality like URL response caching. This caching is the magic that enables progressive web applications to work offline.

Service worker cache is awesome because it gives you a persistence medium for keeping network responses, such as other pages, images, scripts, and CSS files, in a controllable cache. When a network request is made, it passes through the service worker, where you decide whether to return the cached response or make the network round trip.

This is not to be confused with the browser cache, which we have had for a long time. The main difference is that developers have control over how cached assets are managed. The browser cache relies on Cache-Control header values, and even then it is not a reliable persistence medium.

Plus, with the browser cache alone, the web site is unavailable when the consumer's phone or desktop loses Internet connectivity, even if some assets are cached.

This newer caching system replaces a previous attempt at powering offline web experiences called appCache. Don't worry about it anymore; browsers are in the process of removing support, and it never really worked the way we wanted.

Service worker cache learned from the sins of appCache to produce a low level API that allows granular control and maintains application security.

Does Service Worker Caching Really Make Your Pages Load Faster?

Service worker caching is often sold as a way to make your web pages render faster. And while that can be true there are some caveats to that story.

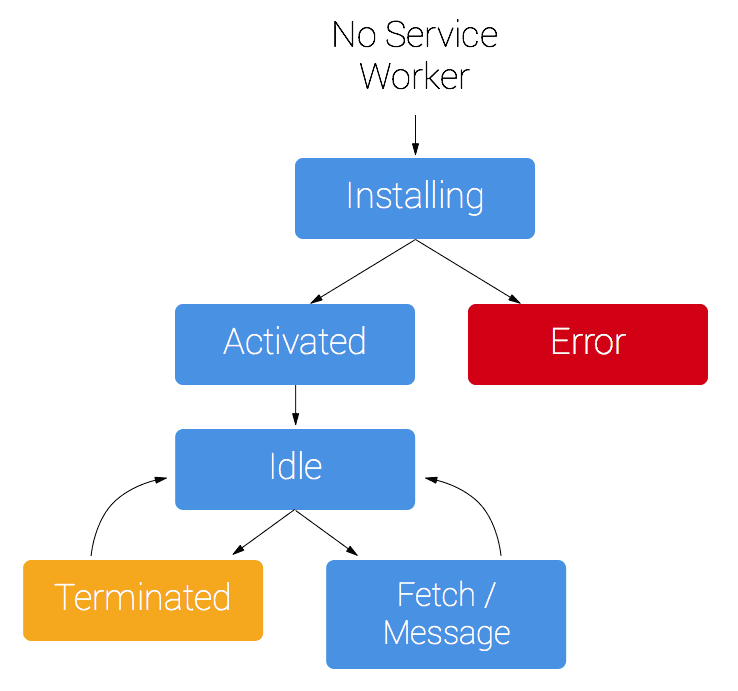

Caching only matters after the first page is loaded. Service workers honor a life cycle: they are not active or in control when the first page of a site is loaded in the visitor's first session. Once a worker is registered and activated, you can programmatically have it take control of the pages that are already open.

By default, the user must leave the site and return before a service worker works. This is by design to keep a service worker from potentially breaking the user experience.
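As a rough sketch of that life cycle, the page registers the worker, and the worker can claim already-open pages once it activates. The file name `/sw.js` and the helper `canUseServiceWorker` are assumptions for illustration. The page-side and worker-side parts would normally live in separate files; they are shown together here only to keep the example short.

```javascript
// Hypothetical helper: true when a navigator-like object exposes the
// service worker API.
function canUseServiceWorker(nav) {
  return Boolean(nav) && "serviceWorker" in nav;
}

// Page side: register the worker. "/sw.js" is an assumed file name.
if (typeof navigator !== "undefined" && canUseServiceWorker(navigator)) {
  navigator.serviceWorker
    .register("/sw.js")
    .then((registration) => console.log("registered for", registration.scope))
    .catch((error) => console.error("registration failed", error));
}

// Worker side (inside sw.js): take control of already-open pages once the
// worker activates, instead of waiting for the next navigation.
if (typeof ServiceWorkerGlobalScope !== "undefined") {
  self.addEventListener("activate", (event) => {
    event.waitUntil(self.clients.claim());
  });
}
```

Whether claiming open pages is a good idea depends on how tolerant those pages are of a worker appearing mid-session.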

Even if the service worker is active and you have pre-cached all a page’s responses locally it does not guarantee the page renders fast. It only means the network requests are returned from the local cache, eliminating any potential time to first byte issues you may have.

Page speed is more a factor of render time once the network responses are collected. For most pages the network accounts for about 5% of the total page speed or render time.

If you have a lot of JavaScript, your page takes longer to render, and the service worker won't help you with that.

How Does Service Worker Caching Work?

If you don't know much about service workers, that's OK. Even though they have existed for close to 5 years, they only started getting mainstream attention in the past 2 years. The majority of websites have not yet implemented service workers, which means they won't work offline.

Service worker caching requires the browser to support the Cache API, which is available to both the service worker and the main (UI) thread. The Cache API provides the methods you use to programmatically manage how network responses are cached.

Responses are stored in the cache as a key-value pair, where the key is the Request and the value is the Response. Each one of these is an actual object, not just a JSON representation. This means you can cache anything that is requested from the Internet, including images, PDFs, videos, etc.

These cached items are stored in named caches. Think about these as file cabinet drawers and each item as a folder in the drawer. I use named caches as a way to organize my cached assets to make them easier to manage.
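To make the file cabinet idea concrete, here is a minimal sketch that picks a named cache for each URL and stores the response there. The cache names and the extension-based convention are assumptions for illustration, not the only way to organize caches.

```javascript
// Hypothetical cache names, one "drawer" per kind of asset.
const CACHE_NAMES = {
  pages: "pages-v1",
  images: "images-v1",
  static: "static-v1",
};

// Pick a drawer from the URL's file extension (an assumed convention).
function cacheNameFor(url) {
  // The base URL only matters when a relative path is passed in.
  const { pathname } = new URL(url, "https://example.com");
  if (/\.(png|jpe?g|gif|svg|webp)$/i.test(pathname)) return CACHE_NAMES.images;
  if (/\.(js|css|woff2?)$/i.test(pathname)) return CACHE_NAMES.static;
  return CACHE_NAMES.pages;
}

// Store a response in the drawer chosen for its URL.
// `cacheStorage` is the global `caches` object in a browser or worker.
async function storeResponse(cacheStorage, request, response) {
  const cache = await cacheStorage.open(cacheNameFor(request.url));
  await cache.put(request, response);
}
```

In a service worker you would call it as `storeResponse(caches, event.request, response.clone())`.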

The service worker can make a website work while the device is offline if the service worker has a fetch event handler.

    self.addEventListener("fetch", event => {
      // manage network requests
    });

This is your hook into the network pipeline. You can intercept all the requests, interrogate the Request object and execute your caching strategy. More on caching strategies in a second.

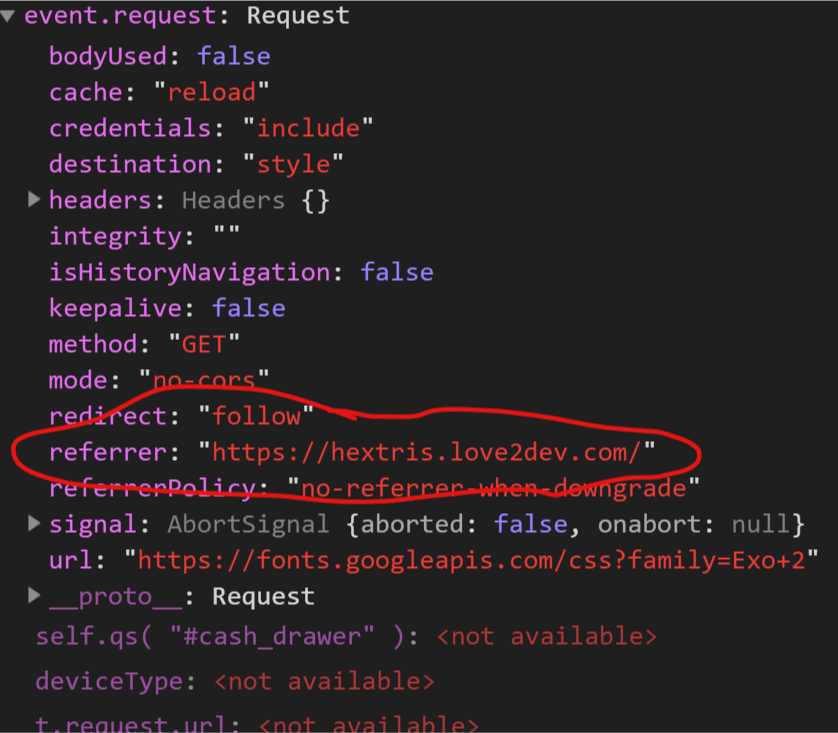

The request object is available in the fetch event handler's event parameter.

    event.request

You can check what the requested URL is, most headers and other values to help you manage how the response is returned.
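As an illustration of what can be read from the Request object, the sketch below collects the values caching logic usually looks at. The helper name `describeRequest` is hypothetical; the properties it reads (`url`, `method`, `destination`, `headers`) are standard Request members.

```javascript
// Summarize the parts of a Request that caching logic usually inspects.
function describeRequest(request) {
  const url = new URL(request.url);
  return {
    method: request.method,            // "GET", "POST", ...
    origin: url.origin,
    pathname: url.pathname,
    destination: request.destination,  // "document", "image", "script", ...
    accept: request.headers.get("accept"),
  };
}

// Inside a service worker, log a summary of every intercepted request.
if (typeof ServiceWorkerGlobalScope !== "undefined") {
  self.addEventListener("fetch", (event) => {
    console.log(describeRequest(event.request));
  });
}
```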

I mentioned caching strategies, which is a fancy way to say you can define the logic used to serve each request. You may want to always retrieve from the local cache, or always from the network.

My default strategy is checking the local cache, then making the network request if nothing is cached. When the network responds I cache the response for the next request and return a clone of the response to the main thread.

It can get very complex, which is why I wrote a book about progressive web apps and spent roughly 200 pages going over service worker caching.

For a real production PWA you will want to use different caching strategies for different types of requests. You will also want to apply cache invalidation strategies, etc. This is one reason why I like to use Workbox to help with my service worker development: it abstracts a lot of the gnarly details away for me.

If you want to pass the request to the network you make a fetch call, supplying the request object:

    return fetch(event.request);

If you want to check the cache before hitting the network, you look in the cache for a matching cached response. If one exists and is not stale, you return it to the UI. If no valid cached response exists, you go to the network to get the current response.

    self.addEventListener("fetch", function (event) {
      event.respondWith(
        caches.match(event.request).then(function (cached) {
          // Serve the cached response when one exists.
          if (cached) {
            return cached;
          }
          // Otherwise go to the network and keep a copy for next time.
          return fetch(event.request).then(function (response) {
            // "dynamic" is a placeholder cache name.
            return caches.open("dynamic").then(function (cache) {
              cache.put(event.request, response.clone());
              return response;
            });
          });
        })
      );
    });

This example code is a basic service worker workflow. For real applications my logic gets much more complicated because more is typically involved. I tell students your caching strategy depends on the data or response's personality.

By that I mean each network request and response pair is unique and needs to be handled accordingly. There is not one caching strategy that fits all applications or even all requests within an application.
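For contrast with the cache-first flow, here is a minimal sketch of a network-first strategy, which suits responses that should be fresh whenever the device is online. The function name, the `"dynamic-v1"` cache name, and the optional `fetchFn` and `cacheStorage` parameters are assumptions; the parameters exist only so the sketch can be exercised outside a browser.

```javascript
// Network first: prefer a fresh response, fall back to the cache when the
// network fails. `fetchFn` and `cacheStorage` default to the browser globals.
async function networkFirst(request, cacheName, fetchFn, cacheStorage) {
  const doFetch = fetchFn || fetch;
  const storage = cacheStorage || caches;
  const cache = await storage.open(cacheName);
  try {
    const response = await doFetch(request);
    if (response.ok) {
      // Keep a copy so the next offline request can be answered.
      await cache.put(request, response.clone());
    }
    return response;
  } catch (networkError) {
    const cached = await cache.match(request);
    if (cached) return cached;
    throw networkError; // nothing cached either, so surface the failure
  }
}

// Inside a service worker ("dynamic-v1" is a placeholder cache name).
if (typeof ServiceWorkerGlobalScope !== "undefined") {
  self.addEventListener("fetch", (event) => {
    event.respondWith(networkFirst(event.request, "dynamic-v1"));
  });
}
```

The trade-off is the reverse of cache-first: the user waits for the network on every request, but only sees cached data when the network is unavailable.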

I have about 24 base caching strategy patterns I use. I use those as a base and stem off those for each application. This is where we as developers can have lots of fun, I mean there is lots of room for JavaScript in the service worker. Plus this JavaScript does not block the main thread from rendering content!

When is the Caching Benefit Realized?

Like I mentioned before you won’t reap the caching or offline benefits until the service worker is registered and active. This only happens after all instances of the website are closed in the browser.

You can override the default behavior by calling the skipWaiting method.

    self.addEventListener("install", event => {
      self.skipWaiting();
      // do pre-caching stuff
    });

Be careful using skipWaiting because you could potentially break the user's experience. This is a potential issue if you are upgrading the service worker or web site assets. If you have a page loaded expecting version 1.0 and the new service worker removes the 1.0 responses and replaces them with version 2.0 responses it could get ugly.

I typically don't worry about that too much because I think you need to make your front-end code lean and resilient. Which is a different conversation.
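One common way to manage the version swap described above is to give each release its own cache names and delete outdated caches when the new worker activates. The sketch below assumes hypothetical names such as `static-v2`; the helper `staleCacheNames` is illustrative.

```javascript
// Hypothetical cache names for the current release.
const CURRENT_CACHES = ["static-v2", "pages-v2"];

// Return the cache names that no longer belong to the current release.
function staleCacheNames(existingNames, currentNames) {
  return existingNames.filter((name) => !currentNames.includes(name));
}

// Inside a service worker: remove old caches once this version activates.
if (typeof ServiceWorkerGlobalScope !== "undefined") {
  self.addEventListener("activate", (event) => {
    event.waitUntil(
      caches.keys().then((names) =>
        Promise.all(
          staleCacheNames(names, CURRENT_CACHES).map((name) => caches.delete(name))
        )
      )
    );
  });
}
```

Combined with skipWaiting, this cleanup runs while old pages may still be open, which is exactly the situation to test before shipping.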

Because of the way the service worker life cycle works, you won't really feel the benefits of the service worker eliminating the network requests until the 2nd visit, or at least the 2nd page load during the initial session, assuming skipWaiting has been called.

This means service worker caching won’t really help your organic search rankings. The Googlebot uses an instance of Chrome 42, give or take, which supports service workers, but does not exercise the pages it spiders in a way that leverages service worker caching.

The search spiders will load your site as if they had never visited before and they won’t retain your service worker registration.

Can You Disable Service Worker Caching From Specific Pages?

Yes and no.

When a service worker is registered and has a fetch event handler, all network requests are passed through the service worker. This means any caching rules you have will be executed for all pages in the service worker's scope.

What I think you may really be asking is whether you can control which URLs are cached and which ones aren't.

This is where you can create a set of rules that trigger different caching strategies based on the requested URL's pattern. For example, I often apply different caching rules to images than I do HTML pages. I also apply different rules to images loaded by different pages as well as the pages themselves.

I drive this typically using regular expressions. I allow blog posts to be cached for about 10 days and I cap the number of articles that can be cached. I also apply different invalidation rules to the blog images than I do to my site's logo.
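A minimal sketch of such regular-expression-driven rules might look like the following. The patterns, cache names, and limits are hypothetical, and the `cachedAt` timestamp is assumed to be something you record yourself when storing a response, since the Cache API does not track it for you.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Hypothetical rules; the first matching pattern wins.
const CACHE_RULES = [
  { pattern: /^\/blog\//, cacheName: "blog-posts", maxAgeDays: 10, maxEntries: 20 },
  { pattern: /\.(png|jpe?g|webp)$/i, cacheName: "images", maxAgeDays: 30, maxEntries: 50 },
];

// Find the rule for a URL, or null when no rule applies.
function findRule(url) {
  const { pathname } = new URL(url, "https://example.com");
  return CACHE_RULES.find((rule) => rule.pattern.test(pathname)) || null;
}

// True when an entry cached at `cachedAt` (ms since epoch) is too old.
function isExpired(cachedAt, maxAgeDays, now = Date.now()) {
  return now - cachedAt > maxAgeDays * DAY_MS;
}

// Trim a cache to `maxEntries`, deleting the oldest entries first.
// cache.keys() resolves with requests in insertion order.
async function trimCache(cache, maxEntries) {
  const keys = await cache.keys();
  const extra = keys.slice(0, Math.max(0, keys.length - maxEntries));
  await Promise.all(extra.map((request) => cache.delete(request)));
}
```

In a fetch handler you would look up the rule with `findRule(event.request.url)`, then use its `cacheName`, `maxAgeDays`, and `maxEntries` to drive the strategy.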

API calls are often a different breed. Many times, these requests are made to get fresh data and require different time to live rules. I also avoid caching POST (like form submissions) responses.
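Those distinctions can be captured in a small routing function. This is only a sketch: the `/api/` path prefix and the strategy names are assumptions for illustration.

```javascript
// Decide how a request should be served.
function strategyFor(request) {
  // Form posts and other non-GET requests always go to the network.
  if (request.method !== "GET") return "network-only";
  const { pathname } = new URL(request.url);
  // API calls want fresh data, so try the network before the cache.
  if (pathname.startsWith("/api/")) return "network-first";
  // Everything else can be served from the cache when available.
  return "cache-first";
}
```

The fetch handler can then switch on the returned name to pick the matching strategy implementation.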

If you really want to enforce rules based on the page loaded in the browser, you might be able to do that. A quick and dirty solution would be to append an extra query string parameter you can catch in the service worker.

You do have access to the request's referrer value, which tells you what page initiated the request. So you could configure your fetch event handler logic to look for certain pages and execute caching logic different from other pages. In this case you could make it bypass the cache altogether and go straight to the network.
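Both ideas, the query string flag and the referrer check, can be sketched in one helper. The `nocache` parameter name and the page list are hypothetical; `request.referrer` is the standard Request property and is an empty string when no referrer is available.

```javascript
// Hypothetical list of pages whose requests should skip the cache.
const BYPASS_PAGES = ["/checkout/", "/account/"];

// True when the request should go straight to the network.
function shouldBypassCache(request) {
  // Quick and dirty: an assumed "nocache" query string flag.
  if (new URL(request.url).searchParams.has("nocache")) return true;
  // Otherwise look at which page initiated the request.
  if (!request.referrer) return false;
  const { pathname } = new URL(request.referrer);
  return BYPASS_PAGES.some((page) => pathname.startsWith(page));
}

// Inside a service worker: answer bypassed requests from the network only.
if (typeof ServiceWorkerGlobalScope !== "undefined") {
  self.addEventListener("fetch", (event) => {
    if (shouldBypassCache(event.request)) {
      event.respondWith(fetch(event.request));
    }
  });
}
```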

Wrapping it Up

Service worker caching is amazing. It enables web sites to run offline and shaves some time off the full-page load time. But it is not necessarily the panacea many think it is.

You won't reap organic search benefits for faster load times since the service worker cache won’t be used by the Googlebot. Visitors won’t experience the difference the first time they visit your site either.

Service workers are not active until the 2nd visit, or the 2nd page load if you call skipWaiting.

This does not mean you should not bother with using a service worker to enhance your application's capabilities. They can provide enhanced values, especially when it comes to reducing the amount of network chatter and providing a smooth offline experience.

Because most of the page rendering cycle depends on how much JavaScript, CSS, and imagery is required, just retrieving those files from the local cache won't speed things up tremendously, unless you have also taken the time to optimize the resources required to render the page.