Bing's URL & Content Submission API Can Instantly Index Your Content

Do you want your new and updated web pages instantly indexed by search engines?

I know I do!

The standard indexing workflow requires search engines to discover fresh content. They 'poll' your site content or discover a link from another page.

This can lead to a delay of days or weeks before new content is crawled and included in search results.

Microsoft has changed that process and now allows you to tell them when new content is ready to crawl.

At the 2019 SMX West conference the Microsoft Bing Team announced they would be offering a notification API. The URL Submission and Content API is now official.

The service is a new way to submit a URL or batch of URLs to Bing.

Bing will stop spidering your pages without a prompt from the site. This puts the responsibility for triggering Bingbot to visit a page on the page or site owner, not on Microsoft.

Sites can earn trust so they can submit up to 10,000 URLs per day.

The initial announcement sent some ripples through the search community. The move signals a large disruption to the way search engines function.

Many wondered how Bing could work without discovering new content. For now, traditional crawling will continue. My understanding is that submitted content should get priority for crawling and indexing.

There are no public signals that Google will match this service. So for now you will need to rely on casual visits from Googlebot.

I applaud the move. Not only does this benefit serious online marketers, it also reduces the amount of cloud computing resources needed to find updated content. This should free Microsoft to improve other aspects of the search service.

The good news is site owners can automate submission. This is why I created the Ping Bing nodejs Module. It makes submitting updated URLs from nodejs-based rendering pipelines easy.

How will this change the way SEO works? And why is this a great disruption to the search optimization world?

How Bing Will Spider Content

Traditionally search spiders have aggressively felt their way through the maze of links connecting pages together on the web. That is why we call them spiders.

This means companies like Google, Bing, DuckDuckGo and others need little software agents that operate at a massive scale to consume every page published on the Internet. These agents are called bots, but they are just the first pass to the entire search ranking workflow.

Bots just find URLs and consume the content. They are not responsible for evaluation; that is handed off to a different process. Ultimately the content is processed and scored, and we eventually see results for search queries.

Bots consume a lot of resources, both on the search engine side and your web server, plus bandwidth between the two points.

I know from evaluating site logs over the years, and from looking at reports in the Google and Bing search consoles, that bots generate a lot of activity on many sites.

We know there are over 2 billion public websites today, which alone means there are many pages these spiders must check out.

But here's the thing: most URLs never change. Spidering each one, even once a month, means a lot of wasted effort for everyone.

What Bing has decided to do is change to a passive spidering process where they will check out your pages when you tell them to do so.

Now, instead of Bing wasting time and resources spidering the same page with the same content a few dozen times a year, it will only spider the URL when the owner notifies the search engine to do so.

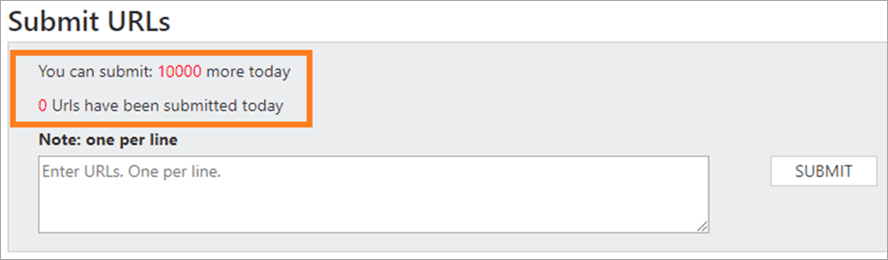

There are two ways to notify Bing: the manual submission tool in the Bing Webmaster Tools portal, or the Bing API.

While manually updating through the portal will work for a small site, it will not scale for larger sites. This is why API integration is important.

While there are ways to notify both Google and Bing when a sitemap or RSS feed updates, using an API to explicitly notify when a page updates is much better.

The ideal scenario will have your publishing workflow automatically notify all search engines about new content or updates.

URL Submission Limit and Earning Trust

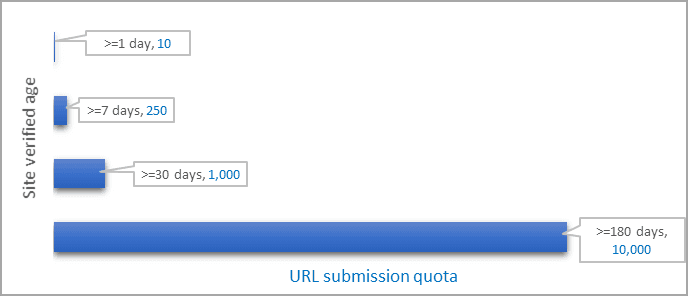

Don't think you can just go and notify Bing that your entire 100,000-URL website has updated all at once. They impose limits, which start small and increase the longer a site is verified with Bing Webmaster Tools.

After 6 months a site can submit up to 10,000 URLs per day, far more than almost any website needs, even a large news site with a million articles or an e-commerce site.

There are only a handful of sites that have 10,000 daily page updates. I think Amazon might be an example of a site needing more than the 10,000 per day limit and I am sure they have an arrangement with both Microsoft and Google.

The rate limits work like this:

- After 1 day you can submit 1 URL per day

- After 1 week you can submit up to 250 per day

- After 1 month the limit increases to 1,000 per day

- After 6 months you max out at 10,000 per day
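
To make the tiers concrete, here is a minimal sketch of a lookup that maps how long a site has been verified to its daily limit. The `dailyUrlLimit` helper and the `QUOTA_TIERS` table are hypothetical names of my own, not part of any Bing API. It assumes a month is 30 days, six months is 180 days, and that a site verified for less than a day has no quota yet.

```javascript
// Hypothetical lookup table built from the tiers listed above.
// Assumptions: 1 month = 30 days, 6 months = 180 days.
const QUOTA_TIERS = [
  { minDays: 180, limit: 10000 },
  { minDays: 30, limit: 1000 },
  { minDays: 7, limit: 250 },
  { minDays: 1, limit: 1 },
];

// Returns the daily submission limit for a site that has been
// verified for `daysVerified` days. Returns 0 before the first
// full day, which is an assumption rather than documented behavior.
function dailyUrlLimit(daysVerified) {
  const tier = QUOTA_TIERS.find((t) => daysVerified >= t.minDays);
  return tier ? tier.limit : 0;
}

console.log(dailyUrlLimit(45)); // 1000
```

A publishing workflow could call a helper like this before each run and trim its list of URLs to fit under the limit.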

Don't think this gives you liberty to resubmit your entire site every day to trigger the bot to visit. While it is not stated in the Bing blog post, I am sure they will begin to ignore repeated requests for pages that show little sign of change.

The goal for Microsoft is to reduce the amount of resources needed on both ends. You still will have the bot visit and evaluate your content, just more efficiently.
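
One way to avoid resubmitting pages that have not changed is to keep a hash of each page's rendered content and only submit URLs whose hash differs from the last publish. The sketch below uses Node's built-in `crypto` module. The `contentHash` and `selectChangedUrls` helpers, and the idea of storing hashes in a `Map`, are my own illustration and not something Bing requires.

```javascript
const crypto = require('node:crypto');

// Hash the rendered page body so two builds can be compared cheaply.
function contentHash(html) {
  return crypto.createHash('sha256').update(html, 'utf8').digest('hex');
}

// pages: array of { url, html } for the current build.
// previousHashes: Map of url -> hash saved from the last publish.
// Returns the URLs that are new or changed, plus the hash map to
// persist for the next run. Where that map is stored is up to you.
function selectChangedUrls(pages, previousHashes) {
  const nextHashes = new Map(previousHashes);
  const changed = [];
  for (const { url, html } of pages) {
    const hash = contentHash(html);
    if (previousHashes.get(url) !== hash) {
      changed.push(url);
    }
    nextHashes.set(url, hash);
  }
  return { changed, nextHashes };
}
```

On the first run every URL counts as changed because nothing has been stored yet. On later runs only edited or new pages come back, which keeps submissions well inside the daily limit.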

Benefits for Business and Online Marketing

Some of the online reactions to this policy change are predictable. But I see it as a positive move.

The obvious benefit is less computing power required to index the web.

But it also means the quality of content indexed should be better.

Think about it: only sites that take the time to verify with the search engine will have the opportunity to have their pages indexed.

And only sites with a built-in mechanism, or an owner who takes the time to manually notify the search engine of an update, will be indexed.

This means mountains of thin and weak content will simply be ignored. This reduces the chance thin and obsolete content will clutter search results.

It also means sites making an intentional effort to rank for keywords will be able to rank a little easier.

This is why I think this will be a positive disruption to the organic search marketing process.

Real Proof

Having an API is one thing, but how fast does it work? And can you see real results?

The answers are super-fast and absolutely.

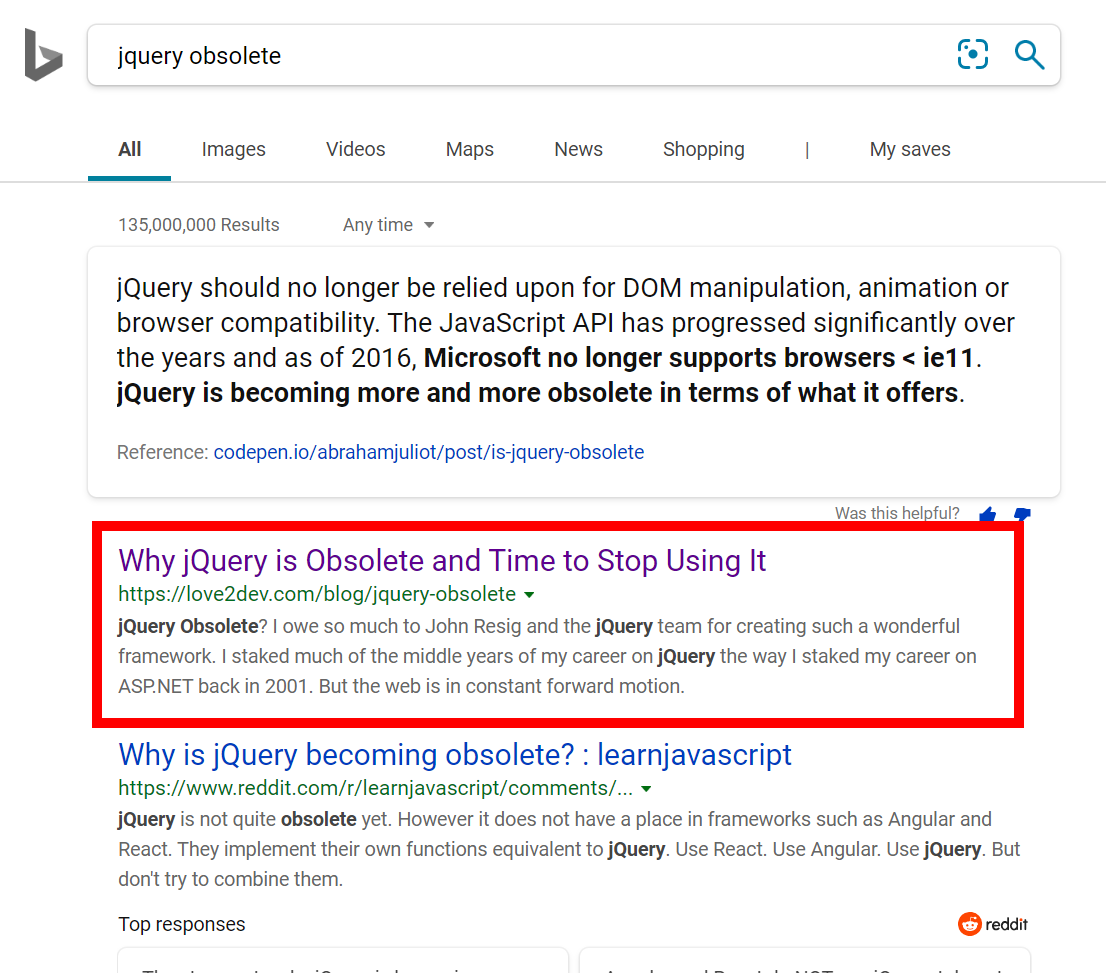

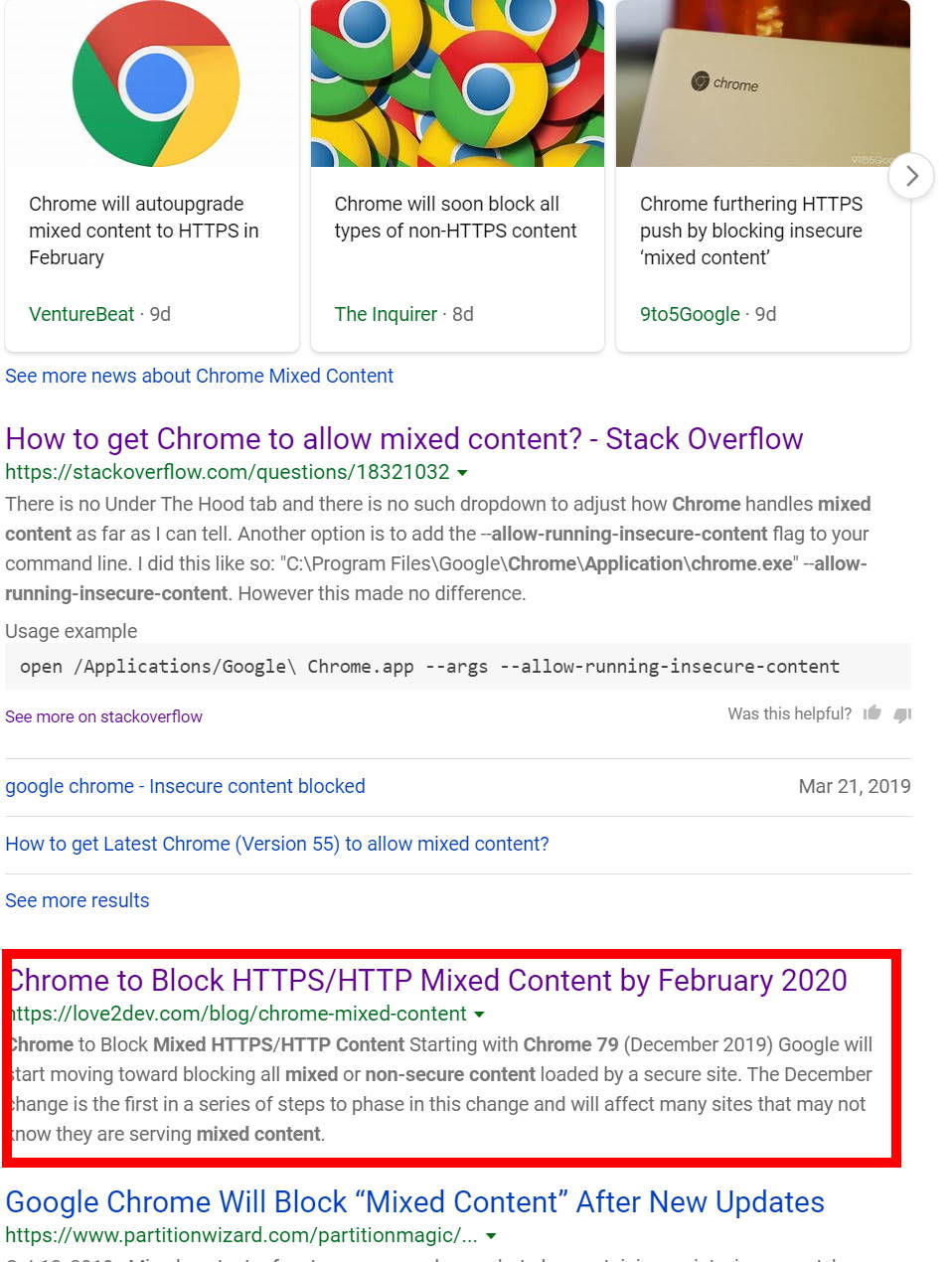

The easiest way to test features like this is to publish content targeting long tail, low competition search terms. With higher competition terms it is harder to isolate success because of the established SERPs.

This is what I have done, several times over the past few months.

When the API was first announced I integrated it into my publishing workflow. The first article I tried was indexed within 45 minutes. I know 45 minutes because that is when I remembered to go check.

Lately I have been more curious to see how fast a result would hit page 1 for a targeted keyword.

I refreshed some old content and published it to a new URL recently. I checked 10 minutes later and it was in position 1, that's right, the top position, for the primary target search term.

The next day I thought, let's really see how fast it can work. I published a new article and in less than 2 minutes, or 120 seconds, it was at #2 for the primary term.

There are many factors at play in reaching #2 that fast. Some have to do with my site being established in certain niches and having trust with the search engine. Plus, my pages generally have their technical house in order, and I structured the articles for good SEO.

I know how to create content to rank. Since these were low competition terms I had no trouble earning a high placement fast.

The main takeaway is that the Bing URL submission API process works fast, much faster than Google's manual process.

Automating Submission

Since the Bing URL submission API is available for any language or platform, you can integrate it into your publishing workflow.

Since our sites use an AWS Lambda serverless workflow using nodejs, I created the ping_bing module. It makes adding the Bing URL submission API easy for your nodejs applications.
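
If you want to see what a direct call might look like without any module, here is a rough sketch. It is not the ping_bing module's interface. The endpoint URL, the `apikey` query parameter and the `siteUrl` / `urlList` body fields reflect my reading of Bing's Webmaster API documentation for batch submission, so verify them against the current docs before relying on this. The function names are my own, and `fetch` assumes Node.js 18 or later.

```javascript
// Assumed endpoint for batch URL submission; confirm in Bing's docs.
const BING_BATCH_ENDPOINT =
  'https://ssl.bing.com/webmaster/api.svc/json/SubmitUrlbatch';

// Builds the request without sending it, so it can be inspected or tested.
function buildBatchRequest(apiKey, siteUrl, urls) {
  const endpoint = new URL(BING_BATCH_ENDPOINT);
  endpoint.searchParams.set('apikey', apiKey);
  return {
    url: endpoint.toString(),
    options: {
      method: 'POST',
      headers: { 'Content-Type': 'application/json; charset=utf-8' },
      body: JSON.stringify({ siteUrl, urlList: urls }),
    },
  };
}

// Sends the batch and throws on a non-2xx response.
async function submitUrls(apiKey, siteUrl, urls) {
  const { url, options } = buildBatchRequest(apiKey, siteUrl, urls);
  const response = await fetch(url, options); // global fetch, Node.js 18+
  if (!response.ok) {
    throw new Error(`Bing submission failed with HTTP ${response.status}`);
  }
  return response.json();
}
```

In a publishing pipeline you would call something like `submitUrls(process.env.BING_API_KEY, 'https://example.com', changedUrls)` as the last step after deploying, with the API key coming from your Bing Webmaster Tools account.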

Summary

While Microsoft Bing only commands a small percentage of the online search market, the recent spidering announcement may be a key part of the search engine market's future.

If this works, and I think it will, I see Google and other search engines adopting a similar policy.

In the end everyone who cares will win. Search companies can use their resources to improve their service offerings and site owners can reduce their hosting bills.

But the big win will go to online marketers because weak content will be ignored, reducing the noise currently crowding search results.